There is a potential irony in South Africa’s now-withdrawn draft artificial intelligence policy, and it may not be the one everyone thinks it is. The obvious joke is that the Department of Communications and Digital Technologies used AI to write an AI policy, and then AI did what AI does when unsupervised: invented stuff. But actually, the department’s problem is not that it used AI to build AI policy, as ironic as that is. Its real mistake may have been not using AI enough.

Had it done so, it might have discovered that there is actually quite a lot of real, useful, South African and African research on AI policy already out there. Not hallucinated research. Real research. With authors. Journals. The whole boring, magnificent apparatus of actual scholarship.

This, alas, was not the path chosen.

The policy was withdrawn after six, perhaps seven fictitious sources were found in its 67-item reference list. Minister Solly Malatsi said the “most plausible explanation” was that AI-generated citations were included without proper verification, and that the lapse compromised the draft’s credibility and integrity.

The interesting thing is not merely that AI hallucinated. Of course it did.

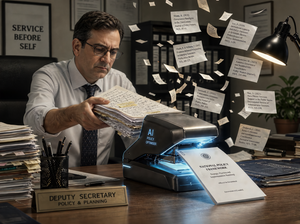

The interesting thing is that the hallucination was allowed into a formal government policy document after passing through the hands of human beings who were themselves writing about the need for human oversight of AI. This is not so much irony as a practical demonstration module. Launching a national AI policy while accidentally demonstrating one of the central risks of AI is a bit like launching a national fire-safety plan by burning down the fire station.

The weird thing is that AI sceptics have been warning about this for years. Emily Bender and her co-authors gave the world the immortal phrase “stochastic parrot” to describe large language models that assemble plausible language without necessarily understanding meaning. Ted Chiang, with even more literary elegance, called ChatGPT “a blurry JPEG of the Web”. The point in both cases is not that these tools are useless. They are often astonishingly useful. The point is that they are not authorities. They are compression engines with manners.

And this is precisely why the policy scandal feels less like a technology failure than an institutional one.

The likely sequence is easy enough to imagine, though impossible to prove without drafts, prompts, and version histories. There was probably a policy text already in motion, heavy with the familiar furniture of modern public administration: governance frameworks, ethical principles, risk categories, institutional arrangements, stakeholder processes, inclusion, transformation, sovereignty, monitoring, oversight and that most South African of policy instincts, the creation of new oversight bodies. Then, somewhere along the line, someone seems to have asked AI, or an AI-assisted process, to supply academic support for ideas that were already there.

This is the wrong way round, obvs, though hardly unusual. The official method of policy-making is supposed to be: investigate the evidence, identify the problem, test options, and then propose policy. The unofficial method, more common than anyone admits, is: decide what you already want to say, consult just enough people to make the minutes respectable, and then hunt for references to make the conclusion look less lonely. In the old days, this produced tendentious literature reviews. In the age of AI, it produces fake journals.

That is why the fake citations are so revealing. Interestingly, none of the citations is specifically linked to particular claims or ideas in the body of the document, which is surely a fault in itself. A bibliography is not meant to be decorative shrubbery around the policy mansion. It is meant to show which claim rests on which evidence. The result is the strange sensation of a document that knows the language of evidence, but not always the discipline of evidence.

Just as an experiment, I asked ChatGPT to suggest published academic papers that might have supported the broad policy framework suggested in the draft: it came up with three pages of references! The department did not need to invent South African-sounding scholarship. It could have cited actual South African scholarship.

ChatGPT actually went further, specifying real academic papers that might have more pertinently supported individual policy ideas than the made-up ones. How incredible is that?

What that shows, I suspect, is that the people drawing up the AI policy were not very familiar with how AI works. And wouldn’t that just be so typical of how a government department, particularly a South African government department, goes about making policy?

Still, the draft was not anti-AI, or useless, or even beyond repair. Its broad ambition was sensible enough: South Africa needs to think seriously about AI, because AI is not waiting politely outside the Cabinet room until the policy process is ready. It is already in classrooms, call centres, newsrooms, banks, law firms, hospitals, farms, advertising agencies and WhatsApp groups. It is writing first drafts, summarising documents, generating code, producing images, helping children with homework, and helping adults pretend they have read the attachments.

My own experience using AI, which I do more and more now, is that it's most useful not when it's asked to dream up something broad and open, but when it has something specific to work on. I almost always use AI to fact-check my writing, and it's usefully censorious and pedantic. It works best when it actually has some meat to chew on, whereas a broad and general question can give you quite generic results. But the other thing you notice as a user is just how fast it's improving. This all leads to another irony in the South African AI policy calamity: if the department had used one of today's more advanced AI models, my guess is that the hallucinations might not have happened.

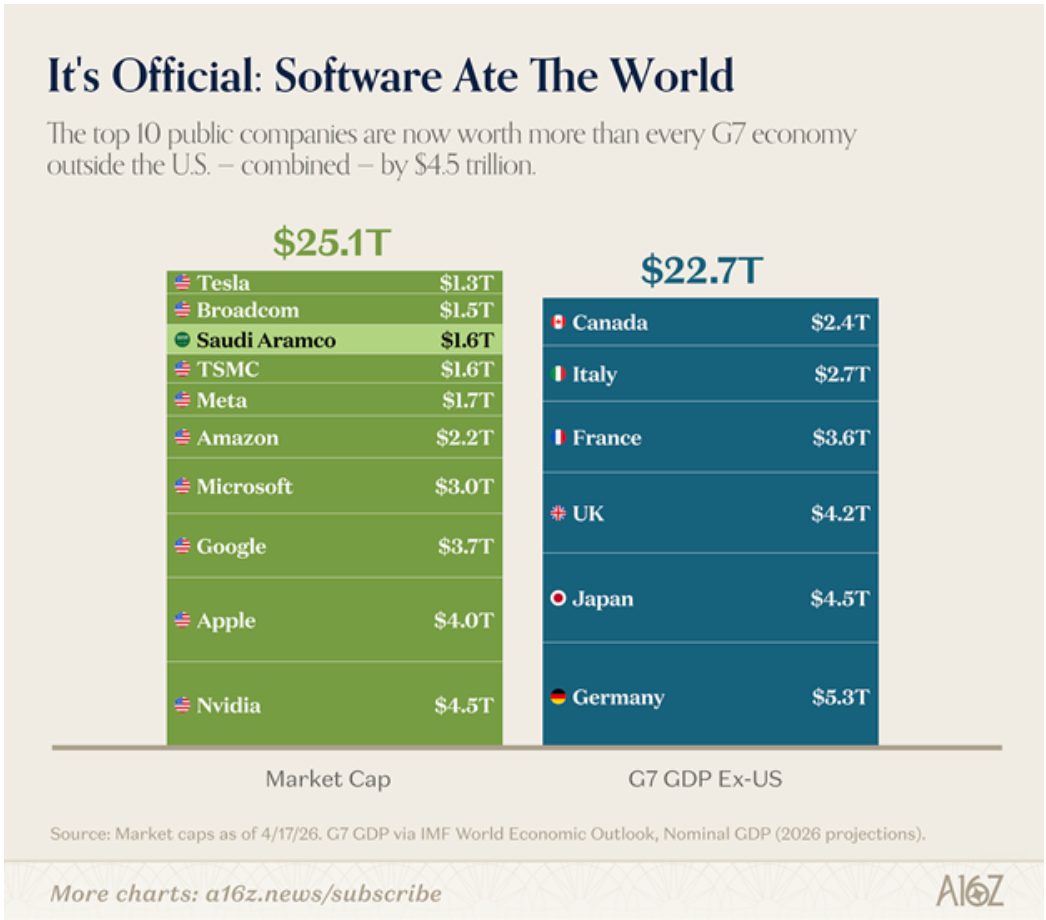

Marc Andreessen once said that “software is eating the world”. AI is now eating the software, or at least licking the plate. Check out this graphic, although note that GDP, which is a "flow" value measured over a period, is not strictly comparable to market cap, which is a "stock" value at a moment in time. Still, it is illustrative.

The question is not whether South Africa (and other countries) participates in this economy, but whether it participates as a builder, a buyer, a rule-taker, a rule-maker, or merely a concerned observer with a subcommittee.

Tech investor Stafford Masie has written an open letter to the department that is useful because it shifts the argument from the morality of AI, which is wide open and arguably an unanswerable question at this point, to the industrial reality of AI. His sharpest line was that South Africa “will not regulate its way into the AI economy” but must build its way into it. He also argued that the draft was too preoccupied with governance machinery before the country had committed properly to compute, infrastructure, electricity, incentives, skills and the actual conditions under which AI companies are built.

There is something wonderfully South African about approaching a technological revolution by first designing the complaints office. Before the data centres, before the GPUs, before the research clusters, before the procurement pathways, before the incentives, before the school-level AI literacy, before the local-language models, before the public-sector pilot projects that actually work, comes the ombud. We may not have the compute, but by heaven we shall have a complaints procedure.

Then there is the AI Insurance Superfund, modelled after the Road Accident Fund, to compensate individuals or entities harmed by AI-driven outcomes where liability is hard to determine. Surely this is a big red flag. The RAF is not exactly the poster child for nimble, solvent, trusted public compensation. Invoking it as a model should have caused every policy reader in the room to sit upright, reach for a pencil, and write in the margin: “Are we quite sure about this?”

The lesson here is not that South Africa should abandon AI policy. Quite the opposite. The scandal might actually have inadvertently done the country a favour by exposing the exact problem the policy should solve: people and institutions are already using AI without enough knowledge, discipline or verification. The answer is not to retreat into embarrassment. The answer is to produce a better draft quickly.

The revised policy should be more ambitious, not less. It should be more empirical, not more defensive. It should be more open to builders, teachers, researchers, technologists, entrepreneurs, and users, not only regulators. It should recognise that the AI economy will not be summoned into existence by an authority, a board, an ombud and a fund, however beautifully gazetted.

The hallucinated references were embarrassing, yes. But embarrassment is underrated. It is one of the few remaining mechanisms by which institutions learn.

The department now has a rare opportunity: to show that it understands the technology better because it has been publicly bitten by it. The next draft should not sound as though AI was asked to endorse the government's existing habits.

It should sound like the government has finally asked AI, and the people who understand it, a better set of questions. 💥

Till next time. 💥
